NSF Awards Database Grant to Improve Efficiency of Sensor Data Analytics

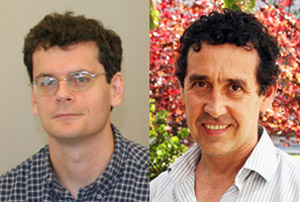

San Diego, Calif., Sept. 3, 2014 — September 1 was the start date of an important new project for two faculty experts in databases and machine learning at the University of California, San Diego. Computer Science and Engineering (CSE) professor Yannis Papakonstantinou in the Jacobs School of Engineering is principal investigator on a $1.1 million new project funded by the National Science Foundation (NSF) to build Plato, a model-based database for compressed, spatiotemporal sensor data. Co-principal investigator on the project is CSE professor Yoav Freund. Both are academic participants in Calit2's Qualcomm Institute.

Analytics for sensor data are less productive than the tools that business intelligence platforms offer for non-sensor data. The reason? Database technology and sensor data processing currently don't mix very well, in part because SQL databases of data collected over time and space often fall short: they lack the critical real-world models (abstractions) that capture the stochastic processes generating the measurements. This is particularly true when dealing with many types of sensor data, when mixing sensor data with metadata from conventional databases, or when many different types of analysis are required. Furthermore, anyone handling this type of data must simultaneously be an expert in signal processing, statistics, and the management of big data.

The Plato system will allow analysts to quickly develop declarative queries that can be automatically optimized. "By doing so, the project will deliver the envisioned productivity gains," says Papakonstantinou. "Plato will also lower the technical sophistication required of users, therefore enabling many scientists and domain specialists to work with sensor-data analytics." While Papakonstantinou focuses on designing a model-aware data model and query language features that combine conventional SQL querying with statistical signal processing, co-PI Freund will develop algorithms that learn the model components of reduced-noise, additive model representations. Other algorithmic work will involve processing queries directly on compressed representations rather than on the original data, and semi-automated algorithms that further compress the model representations by exploiting dependencies between the models.
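To make the idea of querying a compressed representation concrete, the following is a minimal illustrative sketch, not Plato's actual design or API. It assumes a hypothetical piecewise-linear model (the names `Segment`, `fit_segments`, and `model_average` are invented for this example): a sensor series is compressed into fixed-length line segments, and an average over a time range is then computed in closed form from the segment parameters alone, without touching the raw readings.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Segment:
    """One line segment of a hypothetical piecewise-linear model."""
    start: int        # index of the first sample covered (inclusive)
    end: int          # index one past the last sample covered (exclusive)
    slope: float      # change in modeled value per sample
    intercept: float  # modeled value at index `start`


def fit_segments(values: Sequence[float], seg_len: int) -> List[Segment]:
    """Compress a series into fixed-length least-squares line segments."""
    segments = []
    for start in range(0, len(values), seg_len):
        chunk = values[start:start + seg_len]
        n = len(chunk)
        mean_x = (n - 1) / 2
        mean_y = sum(chunk) / n
        var_x = sum((x - mean_x) ** 2 for x in range(n))
        cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(chunk))
        slope = 0.0 if var_x == 0 else cov_xy / var_x
        intercept = mean_y - slope * mean_x
        segments.append(Segment(start, start + n, slope, intercept))
    return segments


def model_sum(seg: Segment, lo: int, hi: int) -> float:
    """Closed-form sum of the modeled values over [lo, hi), clipped to the segment."""
    lo, hi = max(lo, seg.start), min(hi, seg.end)
    if lo >= hi:
        return 0.0
    n = hi - lo
    a = lo - seg.start  # offset of the first covered sample within the segment
    # sum over k = a .. a+n-1 of (intercept + slope * k)
    return n * seg.intercept + seg.slope * (n * a + n * (n - 1) / 2)


def model_average(segments: List[Segment], lo: int, hi: int) -> float:
    """Average over sample indices [lo, hi), using only the model (assumes hi > lo)."""
    return sum(model_sum(s, lo, hi) for s in segments) / (hi - lo)


# Made-up readings: a ramp followed by a flat stretch.
readings = [0.0, 1.0, 2.0, 3.0, 10.0, 10.0, 10.0, 10.0]
segments = fit_segments(readings, seg_len=4)
average = model_average(segments, 0, len(readings))
```

In this toy setting the eight readings collapse into two segments of two parameters each, and the cost of the query depends on the number of segments rather than the number of samples. Real sensor data would not fit the model exactly, so answers computed this way would in general be approximations.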

The researchers are also planning to use the CSE-built UC San Diego Energy Dashboard and the Qualcomm Institute-based Data E-platform Leveraged for Patient Empowerment and Population Health Improvement (DELPHI) as primary use cases for the new database system as it develops. The Dashboard allows the public to look at energy use across the UC San Diego campus, based on continuous, 24/7 building-level and in some cases (notably in the CSE building) office-level energy consumption data. The Energy Dashboard and DELPHI will be stand-ins for the large-scale, statistical sensor data processing challenges that the Plato model is designed to overcome. In the case of DELPHI, sensors capture the full range of data – at the personal, medical, environmental and population levels – that are essential to promote health, prevent disease and improve medical care. Professor Papakonstantinou is a co-PI on the DELPHI project, which is led by Kevin Patrick, a professor of family and preventive medicine in UC San Diego’s School of Medicine, and director of the Qualcomm Institute’s Center for Wireless and Population Health Systems.

For metrics, the Plato exercise will eventually measure both the lines of code needed and the runtime efficiency achieved when performing analytics on the Dashboard's energy sensor data and DELPHI's personalized population health data.

Media Contacts

Doug Ramsey, (858) 822-5825, dramsey@ucsd.edu