SURF-IT Project Analyzes Motion-Capture Data

By Anna Lynn Spitzer

August 2, 2007 – An innovative motion-capture system highlighted Calit2@UCI’s Summer Undergraduate Research Fellowship in Information Technology (SURF-IT) lunchtime presentation last week.

And in a twist on the usual format, after learning about the system's features, the audience was able to experience it first-hand.

John Crawford, assistant professor of arts, and SURF-IT fellow Quin Kennedy discussed their research on the interactive media system called Active Space. The system, which incorporates video-based motion tracking and capture, as well as a host of other features, is used to integrate digital media art into interdisciplinary dance, theatre, music and media installations. Using cameras, computers and sophisticated software, the space continually senses, measures and responds to its occupants’ movements, allowing performers to influence and interact with the technology, in essence to "play the space" as an instrument.

In last week’s presentation, Crawford, who began developing Active Space in 1994, familiarized attendees with the features of the system, which resides in the Embodied Media + Performance Technology Lab (emptLab) in the Calit2 Building.

He explained that motion tracking and capture, first used in biomechanical sciences, now has multiple applications. In addition to performance art, the technology is used in video games, golf lessons and virtual training exercises.

Multiple cameras capture individual body movements, and sophisticated software translates them into individual data points in space. Crawford showed the audience a depiction of a dance video in which 35 markers – represented by dots in space – formed a type of skeleton that moved like the dancer. “You can see essential features of the movement,” he said, adding that this information could be mapped onto a three-dimensional model of a human being.

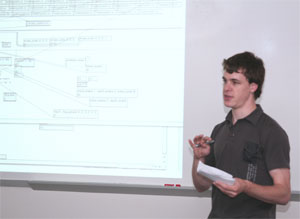

Kennedy’s SURF-IT assignment is to design and build a new software system that adds innovative features and capabilities to the Active Space. He is analyzing existing motion-capture “marker data” that indicates how movement happens in a space. Is the participant moving side to side? Is he swaying his hips or doing lunges?

Using the Max/MSP/Jitter programming environment, which translates motion into numbers on x, y and z axes, Kennedy calculates different nuances of the movement represented by the data. Ultimately, he hopes to move away from a skeletal representation of motion and develop a more mathematically based approach to analyzing motion-capture data.
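To give a rough sense of what calculating movement from x, y and z numbers can look like, here is a minimal sketch in Python. It is not Kennedy's Max/MSP/Jitter work, and everything in it is an assumption made for illustration: the function names `centroid` and `movement_summary`, the frame layout (a list of frames, each a list of `(x, y, z)` marker positions), the frame rate, and the choice of the x axis as the side-to-side direction.

```python
from math import sqrt

def centroid(frame):
    """Average position of all markers in one frame of (x, y, z) tuples."""
    n = len(frame)
    return (
        sum(p[0] for p in frame) / n,
        sum(p[1] for p in frame) / n,
        sum(p[2] for p in frame) / n,
    )

def movement_summary(frames, fps):
    """Summarize how the marker cloud moved over a sequence of frames.

    frames: list of frames, each a list of (x, y, z) marker positions
            (hypothetical layout, not a real motion-capture file format).
    fps:    frames per second of the recording (assumed to be known).
    """
    centers = [centroid(f) for f in frames]
    # Distance the centroid travels between each pair of consecutive frames.
    steps = [
        sqrt(sum((b[i] - a[i]) ** 2 for i in range(3)))
        for a, b in zip(centers, centers[1:])
    ]
    duration = (len(frames) - 1) / fps
    xs = [c[0] for c in centers]
    return {
        "path_length": sum(steps),
        "mean_speed": sum(steps) / duration if duration > 0 else 0.0,
        # Assumes x is the side-to-side axis of the room.
        "side_to_side_range": max(xs) - min(xs),
    }
```

Calling `movement_summary(frames, fps=30)` on a recording would return the total distance the body's center traveled, its average speed and how far it ranged from side to side. These measures are computed directly from the marker positions, with no skeleton involved.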

The data can give dancers important feedback on their movements that can’t be discerned in other ways. In addition, the data can be used to chart historical movement in the kinesphere (personal space), said Crawford. “There are many changes that occur over time,” he explained. “We move our bodies differently at age 20 than we do at age 40.”

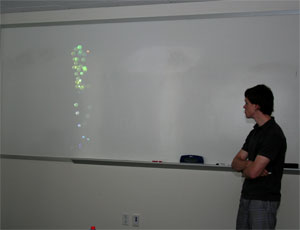

At the conclusion of the talk, audience members visited the emptLab for their own interaction with Active Space. They moved across the room at various speeds, watching as the cameras and computers mathematically plotted their movements on a large screen.