A Musically Creative Machine?

San Diego, CA, March 23, 2008 -- When French composer Georges Bloch visited his alma mater UC San Diego and held a workshop at Calit2, the event morphed from a seminar into a free-wheeling, improvisational musical performance by a human performer -- and a computer.

On March 11, UCSD alumnus and composer Georges Bloch took over the Calit2 Theater at the invitation of UCSD music professor Shlomo Dubnov, an active participant in Calit2.

A former student of composition and computer music in the UCSD Department of Music, Bloch is based at France's interdisciplinary center for music, IRCAM, and teaches at the University of Strasbourg. Together with performer and improviser Mark Dresser, Bloch performed a musical improvisation in which a computer system learned on the fly and responded to the musical ideas presented to it.

Unlike other live computer-music works, where the computer's responses are processed versions of its input, Bloch's demonstration showed the machine creating actual variations in the style of the live musician.

The computer's actions were based on research into style modeling and machine learning by UCSD's Dubnov and Gerard Assayag of France's IRCAM. The machine analyzed the redundancies and repeating patterns in the musical material based on its melodic and spectral contents.

Using pattern-matching algorithms, the music was turned into a graph that represented new, stylistically valid recombination possibilities. This representation, serving as a dynamic memory for the improvising machine, was later used by the computer to create new music variations.
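The idea of turning a performance into a graph of recombination possibilities can be illustrated with a small sketch. The article does not name the algorithm, so the code below assumes a factor oracle, one well-known structure for indexing repeated patterns in a sequence. The function names, the letter symbols standing in for musical events, and the `continuity` parameter are all hypothetical choices made for illustration, not details of the system shown at the workshop.

```python
import random


def build_factor_oracle(seq):
    """Build forward transitions and suffix links for a symbol sequence.

    State i corresponds to having read the first i symbols. A suffix link
    from state i points to an earlier state that shares a repeated ending
    with it; these links are the "recombination" edges of the graph.
    """
    n = len(seq)
    trans = [dict() for _ in range(n + 1)]
    sfx = [-1] * (n + 1)  # the initial state has no suffix link
    for i in range(1, n + 1):
        sym = seq[i - 1]
        trans[i - 1][sym] = i  # transition that replays the original
        k = sfx[i - 1]
        # Walk back along suffix links, adding shortcut transitions.
        while k > -1 and sym not in trans[k]:
            trans[k][sym] = i
            k = sfx[k]
        sfx[i] = 0 if k == -1 else trans[k][sym]
    return trans, sfx


def improvise(seq, sfx, length, continuity=0.8, seed=0):
    """Generate a variation by replaying seq and occasionally jumping.

    With probability `continuity` the next original symbol is played;
    otherwise the walk first follows a suffix link to an earlier state
    and continues from there.
    """
    if not seq:
        raise ValueError("seq must contain at least one symbol")
    rng = random.Random(seed)
    n = len(seq)
    state = 0
    out = []
    while len(out) < length:
        # At the end of the memory a jump is forced; at state 0 none exists.
        if state == n or (state > 0 and rng.random() > continuity):
            state = sfx[state]
        out.append(seq[state])
        state += 1
    return out


# Letters stand in for musical events (an assumption of this sketch).
phrase = list("abbbaab")
transitions, suffix_links = build_factor_oracle(phrase)
variation = improvise(phrase, suffix_links, length=12)
```

In this sketch the suffix links play the role of the dynamic memory: each link marks a point where the material heard so far shares an ending with earlier material, so a jump there continues the music from a different but related context. A lower `continuity` value produces more jumps and therefore freer variations.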

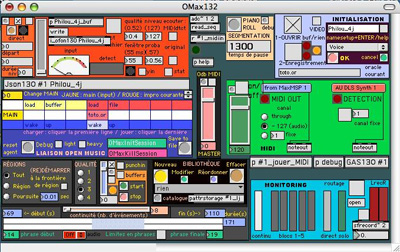

Bloch noted that these concepts were realized in the OMax software developed by Assayag, Bloch, Chemillier and Dubnov. OMax is a software environment that can learn the typical features of a musician's style in real time and play along with the musician interactively, giving the flavor of a machine co-improvisation. OMax is proliferating among improvisers, and the software tool is now widely used as a computer creativity aid in composition.

The workshop was titled "Music and Film Recombination by Machine," and in a video extension developed by Georges Bloch, the image of the performer was re-edited on the fly using similar principles.

In the discussions that emerged during and after the workshop, participants raised questions about the role of anticipation in musical meaning, the design of musical structure in composed improvisations, and the establishment of a dialogue between man and machine in creative information-technology applications.

The collaborative project is supported through Calit2, the UCSD Department of Music, IRCAM, and France's Centre National de la Recherche Scientifique (CNRS).

Media Contacts

Doug Ramsey, 858-822-5825, dramsey@ucsd.edu

Related Links

The OMax Project