Tomography Researchers 'Stitch Together' Collaborations to Help Solve Problems in the Field

By Tiffany Fox, (858) 246-0353, tfox@ucsd.edu

San Diego, CA, Aug. 4, 2008 -- From the way Rick Lawrence describes it, creating a tomographical image of a tiny biological structure sounds a lot like trying to weigh and measure a hibernating grizzly bear.

"The idea is to get a sense of the structure of the object without disturbing it," Lawrence says. "That in itself is a very interesting problem."

Fortunately, every problem has its solution. Bringing those solutions to light was Lawrence's intention in organizing "Tomography Day," an inaugural meeting-of-the-minds held in July at the UC San Diego division of the California Institute for Telecommunications and Information Technology (Calit2).

Lawrence, a research scientist for the National Center for Microscopy and Imaging Research (NCMIR), organized the event in connection with the 2008 Society for Industrial and Applied Mathematics (SIAM) Annual Meeting, which was held the same week at San Diego's Town and Country Resort. His idea was to bring together experts in various fields related to tomography to acquaint them with electron microscope tomography research and discuss problems inherent to instrumentation and data collection. In particular, Lawrence was interested in discussing ways the conventional algorithms for tomography research might be improved or modified for application to electron microscopy.

Tomography is used in numerous sciences, including archaeology and geophysics, but is most often thought of in the context of medical imaging. The word is derived from the Greek word tomos, which means "a section" or "a cutting." Modern variations of tomography use an imaging technique to gather data about an object from multiple angles, then feed that data into a tomographic reconstruction algorithm run on a computer. X-rays, for example, are used to create a Computed Tomography (CT or "CAT") scan, and nuclear magnetic resonance is used to create an MRI.

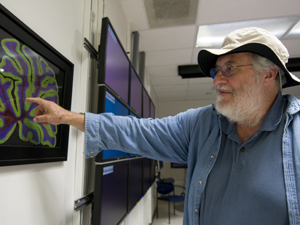

Scientists can also create tomographical images through the use of microscopes. Mark Ellisman, director of the Center for Research in Biological Systems (CRBS), which oversees NCMIR, has research roots in neurophysiology, or the study of the nervous system. One of his research projects involves studying the neurological system from a "many-scaled perspective": that is, from the tiny organelles within a single brain cell all the way up to the anatomy of the nervous system itself.

To get the full picture and create detailed tomographical images, Ellisman uses both a light microscope and an electron microscope to take "snapshots" of the biological structures from various angles and create what amounts to a "panoramic view."

"In essence, this process works very well," Lawrence says, "but the mathematical problem is that to build up a complete picture, you have to take an infinite number of photos. The fewer you take, the more inaccurate the ultimate reconstruction is."
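A toy illustration of why the number of viewing angles matters, written as a minimal Python sketch. It is not NCMIR's reconstruction software; the 2x2 "objects" and the helper names `project_rows`, `project_columns`, and `project_diagonals` are invented for illustration. Two different objects produce identical data from two viewing directions and only become distinguishable once a third direction is added.

```python
def project_rows(grid):
    """Sum along each row: the view from one side."""
    return [sum(row) for row in grid]

def project_columns(grid):
    """Sum down each column: the view from above."""
    return [sum(col) for col in zip(*grid)]

def project_diagonals(grid):
    """Sum along each diagonal (constant row - column): a 45-degree view."""
    rows, cols = len(grid), len(grid[0])
    sums = [0] * (rows + cols - 1)
    for r, row in enumerate(grid):
        for c, value in enumerate(row):
            sums[r - c + cols - 1] += value
    return sums

# Two hypothetical objects that differ, yet look the same from two angles.
object_a = [[1, 0], [0, 1]]
object_b = [[0, 1], [1, 0]]

same_from_two_views = (
    project_rows(object_a) == project_rows(object_b)
    and project_columns(object_a) == project_columns(object_b)
)
differ_with_third_view = project_diagonals(object_a) != project_diagonals(object_b)
```

With only the row and column views, no algorithm could tell the two objects apart; the diagonal view resolves the ambiguity. Larger objects need correspondingly more views.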

Not only that, both light microscopes and electron microscopes have their own inherent limitations. Electron microscopes, which use electrons to illuminate a specimen and create an enlarged image, have much greater resolving power than light microscopes and can obtain much higher magnifications. Some electron microscopes can magnify specimens up to 2 million times, while the best light microscopes are limited to magnifications of 2,000 times.

On the other hand, electron microscopes can only magnify very limited areas and require a scientist to take many painstaking samples of an image and then stitch them together to render the image from a broader perspective.
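The stitching step can be pictured with a deliberately simplified Python sketch. It assumes one-dimensional "tiles" of brightness values that overlap at their edges; the functions `best_overlap` and `stitch` and the sample numbers are hypothetical and are not taken from any NCMIR tool. The sketch finds the overlap where the two tiles agree best, then averages the shared region.

```python
def best_overlap(left, right, min_overlap=2):
    """Return the overlap length where the end of `left` best matches the start of `right`."""
    best_len, best_err = min_overlap, float("inf")
    for n in range(min_overlap, min(len(left), len(right)) + 1):
        # Mean squared difference over the candidate overlap.
        err = sum((a - b) ** 2 for a, b in zip(left[-n:], right[:n])) / n
        if err < best_err:
            best_len, best_err = n, err
    return best_len

def stitch(left, right, min_overlap=2):
    """Join two tiles, averaging the values in the region they share."""
    n = best_overlap(left, right, min_overlap)
    blended = [(a + b) / 2 for a, b in zip(left[-n:], right[:n])]
    return left[:-n] + blended + right[n:]

tile_1 = [3, 5, 8, 13, 21]
tile_2 = [8, 13, 21, 34, 55]
montage = stitch(tile_1, tile_2)
```

Here the tiles agree exactly in the overlap, so the seam is invisible. When the tiles disagree in the shared region, simple averaging of this kind leaves a visible seam.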

To complicate things further, the algorithms for electron microscope tomography were developed from x-ray tomography, mainly because x-ray tomography is the better-endowed and more highly researched field.

"With electron microscope tomography, people assumed that because the instrumentation was like an x-ray, the algorithms would work just like with x-rays," says Lawrence, who works with Ellisman at CRBS. "That's where I came into the picture. I noticed that as the tomographical image reconstructions from electron microscopes were getting bigger, they were also getting qualitatively worse and worse. The textbook math used to explain the process wasn't providing any hints at all."

Lawrence, who comes from a branch of abstract mathematics called algebraic topology, decided it was time to start from scratch and look at the fundamentals of the approach.

"I started measuring objects and noticed the algorithmic model the researchers were using for electron microscopes didn't fit the way the images looked," he says. "People tend to use electron microscopes as if they were light microscopes, but electron microscopes use magnetic lenses. In a magnetic field, the electrons travel in a curving path, so as you change direction and gather data from a different angle, the objects in the image move differently than what is predicted by the x-ray model. So the basic assumption was untrue."
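The mismatch Lawrence describes can be illustrated with a small Python sketch. The straight-line formula is the standard parallel-projection geometry; the curved-path correction is purely an assumption made up for this example (a shift that grows with the square of depth, controlled by a hypothetical `bend` parameter) and is not Lawrence's model of electron trajectories.

```python
import math

def straight_line_position(x, z, tilt_deg):
    """Detector position of a point (x, z) at a given tilt, assuming straight rays."""
    t = math.radians(tilt_deg)
    return x * math.cos(t) + z * math.sin(t)

def curved_path_position(x, z, tilt_deg, bend=0.01):
    """Same point with a HYPOTHETICAL depth-dependent shift standing in for curved paths."""
    t = math.radians(tilt_deg)
    depth = -x * math.sin(t) + z * math.cos(t)  # position along the beam direction
    return straight_line_position(x, z, tilt_deg) + bend * depth ** 2

point = (10.0, 4.0)  # made-up coordinates, arbitrary units
mismatch = {
    tilt: curved_path_position(*point, tilt) - straight_line_position(*point, tilt)
    for tilt in (-60, -30, 0, 30, 60)
}
```

In this toy setup the gap between the two predictions changes from one tilt angle to the next, so an algorithm built on the straight-line assumption would place the same feature inconsistently across views.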

Lawrence says the resulting distortion would be concealed with smaller-format electron microscope lenses, "but once you try to take bigger images, you're getting outside of the domain engineers can control and weird things start happening. We end up having to infer the complicated path of the electrons through the object we're trying to tease apart."

Even weirder things happen when the researchers attempt to "stitch" together the tomographical images, Lawrence says.

"On the boundaries of the stitching, things tend to look kind of nasty. The electrons going through one image have different paths from the electrons going through other images, even though they are traversing the same part of the object. You can't untangle that in two-dimensional images; you need images from many different directions. What we'd like to do is take all of that three-dimensional information and modify the images so that they stitch together correctly."

The challenge, therefore, is to reconfigure the procedures and algorithms for electron microscopes so they work with particles that travel in curving paths, thereby allowing for more seamless stitching and a more cohesive image.

Enter Tomography Day. Lawrence says the meeting — which was co-staged by the National Biomedical Computation Resource (NBCR), headed by UCSD's Peter Arzberger — created enormous and geographically widespread enthusiasm for research into improving electron microscope tomography, and also set the stage for future experimentation in other areas of the field.

"In my enthusiasm I organized a 12-hour conference, and everyone stayed for almost the whole time. We got to the point where we were talking about using electron microscopes in very large format and stitching together montages with millions of pixels. The really neat thing about it was there were a large number of grad students who came to the meeting. People now have a better feeling for how their interests connect with other people's work. I think several research collaborations were established that day."

Groups at Caltech and Stanford universities, for example, are looking at segmentation within tomography, which entails the tedious process of finding and isolating certain structures within an object. Their teams would benefit from other researchers' work in artificial intelligence, Lawrence says, because "rather than wasting a grad student's time on doing such a mundane task, we can automate that process so people can get faster results."

Similarly, both the segmentation and artificial intelligence researchers would benefit from work being done in lambda tomography, where the boundaries of objects are isolated.

"That has an immediate connection to problems in artificial intelligence," Lawrence says. "If you can reconstruct an image so that the main thing you see is sharp boundaries, then the job of segmentation becomes that much easier."
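Why sharp boundaries help can be seen in a minimal Python sketch. It is not lambda tomography itself; it simply assumes a one-dimensional brightness profile in which boundaries already stand out as large jumps, and uses the hypothetical helpers `edge_strength` and `segment` to split the profile wherever a jump exceeds a chosen threshold.

```python
def edge_strength(profile):
    """Absolute jump between each pair of neighboring samples."""
    return [abs(b - a) for a, b in zip(profile, profile[1:])]

def segment(profile, threshold):
    """Split the profile into regions wherever the jump exceeds `threshold`."""
    segments, current = [], [profile[0]]
    for value, jump in zip(profile[1:], edge_strength(profile)):
        if jump > threshold:
            segments.append(current)  # a boundary: close the current region
            current = []
        current.append(value)
    segments.append(current)
    return segments

profile = [2, 2, 3, 9, 9, 8, 4, 4]  # made-up brightness values
regions = segment(profile, threshold=3)
```

When the boundaries are crisp, a single threshold separates the regions; when they are blurred, the jumps shrink toward the level of ordinary variation and the same rule starts to fail.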

Adds Lawrence: "I really would like to repeat Tomography Day. The question is: Can we make it a little more formal, make it an ongoing thing? We also need to decide what kind of projects we can organize, whether we can make this an educational process for grad students or help with the services the NBCR provides."

"I would also like to establish some sort of online data and software exchange, a 'complete package' where people could visit the site, learn about what other people are doing and try other people's experimental programs on their own data. We not only would have some central place where people can get software, but also share a common data format," says Lawrence. "Ninety percent of the problems in this type of work, such as duplicated coding efforts and decoding data from other sites, result from a lack of communication."

Related Links

National Center for Microscopy and Imaging Research

Society for Industrial and Applied Mathematics

Center for Research in Biological Systems

National Biomedical Computation Resource