Qualcomm Institute Gallery Harvests Art from Noise of 3D Laser Scanning

'Autonomous Sensing ScanLAB' exhibition opens April 16, runs through June 12, 2015

San Diego, April 9, 2015 — An upcoming exhibition at the University of California, San Diego’s Qualcomm Institute will showcase art works derived from large-scale laser scans of buildings, landscapes and the environment. Autonomous Sensing opens April 16 in Atkinson Hall’s gallery@calit2 with a 5 p.m. panel discussion featuring Thomas Pearce and Matthew Shaw of the UK-based ScanLAB, whose 3D LIDAR scans form the basis of the works on display. The panel will be followed by a reception and the official opening to the public.

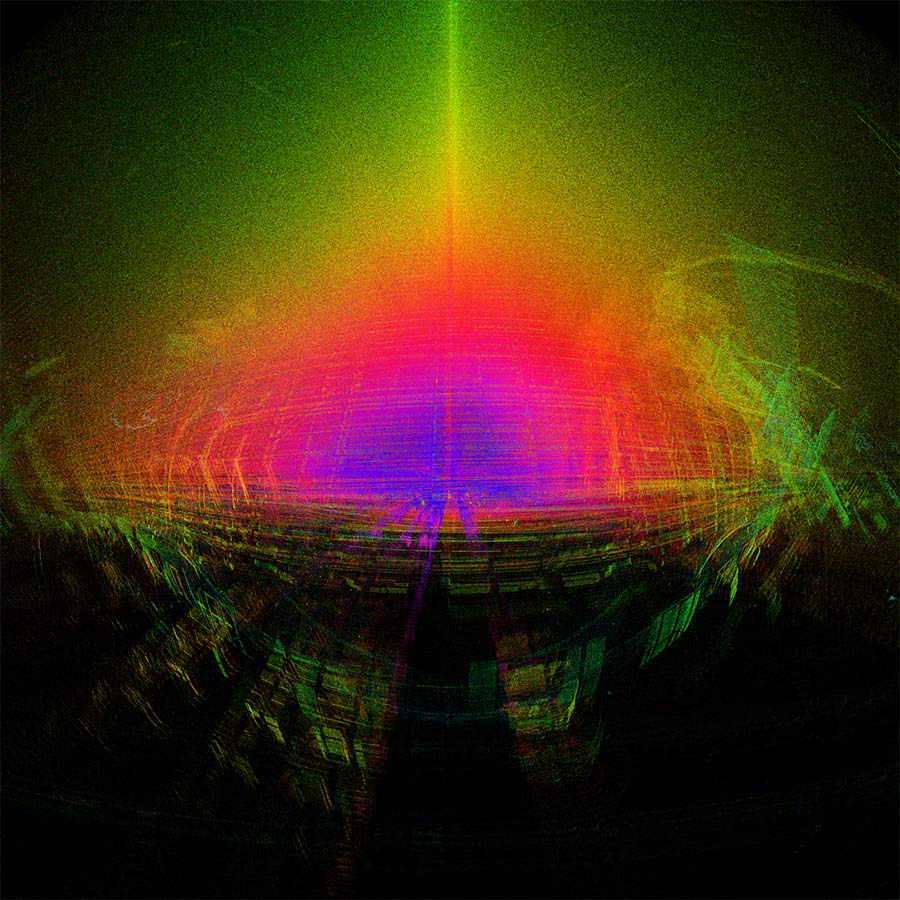

Curated by Ryan Bishop, Benjamin Bratton, Jordan Crandall, Edward Keller and Jussi Parikka, Autonomous Sensing has three primary components. The first is the video installation “Noise: Error in the Void,” which ScanLAB produced from a single, unedited scan of Berlin’s Oberbaum Bridge captured on a cold, overcast afternoon in November 2013. Rather than showing only the seemingly perfect final digital reconstruction that modern scanning technology makes possible, the installation reveals the process itself as the LIDAR scanner “journeys through the droning spheres of error and cataclysmic arrays of inaccurate points.”

Also on display: visual works developed with UC San Diego students in a workshop staged by ScanLAB at the Qualcomm Institute in the days immediately before the exhibition opens. The students will show animated and still point-cloud “speculative mappings” of southern California’s border conditions, in which terrestrial laser scanning technology is (mis)used to smuggle geometries, collapse point clouds and implode topographies across legislative and corporate boundaries. Remote-sensing technologies increasingly reinforce these southern Californian boundary conditions, forming a sentient infrastructure that monitors the slightest disturbance in the flow of materials and people. Constrained not by fences or drones but only by its own scanning range of 330 meters, the LIDAR scanner’s laser beam travels across borders to harvest otherwise inaccessible topographies.

The third component of Autonomous Sensing will be a reader titled Key Topoi, which situates the exhibition in the broader context of theoretical inquiry into the conjunction of machine sensing and natural sensation. This conjunction emerges from a combination of techniques: energy harvesting from artificial leaves; epidermal microelectronics; embedded sensors, including nanoscale chemical sensing; machine vision; deep and universal addressing protocols; and more, all supported by truly ubiquitous, networked computation. Together, these techniques point toward unprecedented capabilities for knowing and experiencing the world.

Indeed, autonomous sensing is more than just a platform for the production of information: it implies a radically pervasive distribution of touch, communication, and intelligence into the fabric of the world itself. Material surfaces sensing the world blend functionally with skin touching things in the world. Information and experience are shared, ways of knowing combined with ways of doing. Agency is located throughout expanded networks of sensing and sensation. Within these landscapes, autonomy is always relative and relational: it is a function of the material technologies that now enable the blending of organic and inorganic sensing, from molecular scale to landscape scale and back again. The project has provocative implications for art and design, computer science and engineering, as well as ecological monitoring, cognitive science and philosophy.

ScanLAB

The London, UK-based studio explores the inherent mistakes made by modern technologies of vision, offering an unedited glimpse of the world as seen through the eyes of the LIDAR machine. LIDAR technology makes it possible to capture the world in three dimensions, creating near-perfect digital 3D replicas of buildings, landscapes, objects and events. However, these digital replicas are always an illusion of perfection. Every technology, despite its promise of accuracy and flawlessness, comes with its own integral accident, its own built-in failure. ScanLAB has long pursued an interest in this failure, the so-called “noise” of 3D scanning. Though this noise is normally elaborately filtered out of the point cloud, we see in it a subversive potential: it deviates from the material world it captures, and that deviation opens up alternate realities that can challenge the status quo rather than being instrumentalized to maintain it. Reality is shrouded in a cloud of mistaken measurements, confused surfaces and misplaced three-dimensional reflections.

ScanLAB's video installation, “Noise: Error in the Void,” takes viewers around Berlin’s Oberbaum Bridge as seen through the moment-to-moment noise of the capture process. The city surface is multiplied and reflected. Echoes of bridge and façade appear out of place. A column of overlapping points burns overhead. An axis of stainless steel railing, water and glass slices through. Fenestration bursts and loops. Infrared wavelengths dictate distance. The noise here is mapped along a grid, aligned and perfectly wrong. The scan sees more than it is possible for it to see. The noise is draped in the colors of the sky. The clouds are scanned, even though they are out of range. Everything is flat, broken only by runway markings. Traces of dog walkers spike up into the cloud. The ground falls away toward the foreground in ripples. The horizon line is muddy with the tones of the ground and the texture of the sky. The center is thick with points, too dense to see through. Underground, only the strongest noise remains.

Media Contacts

Doug Ramsey, (858) 822-5825, dramsey@ucsd.edu

Related Links

Gallery@calit2

ScanLAB Projects